Anthropic has just released a new flagship AI model, but the most important thing about it is what it’s not.

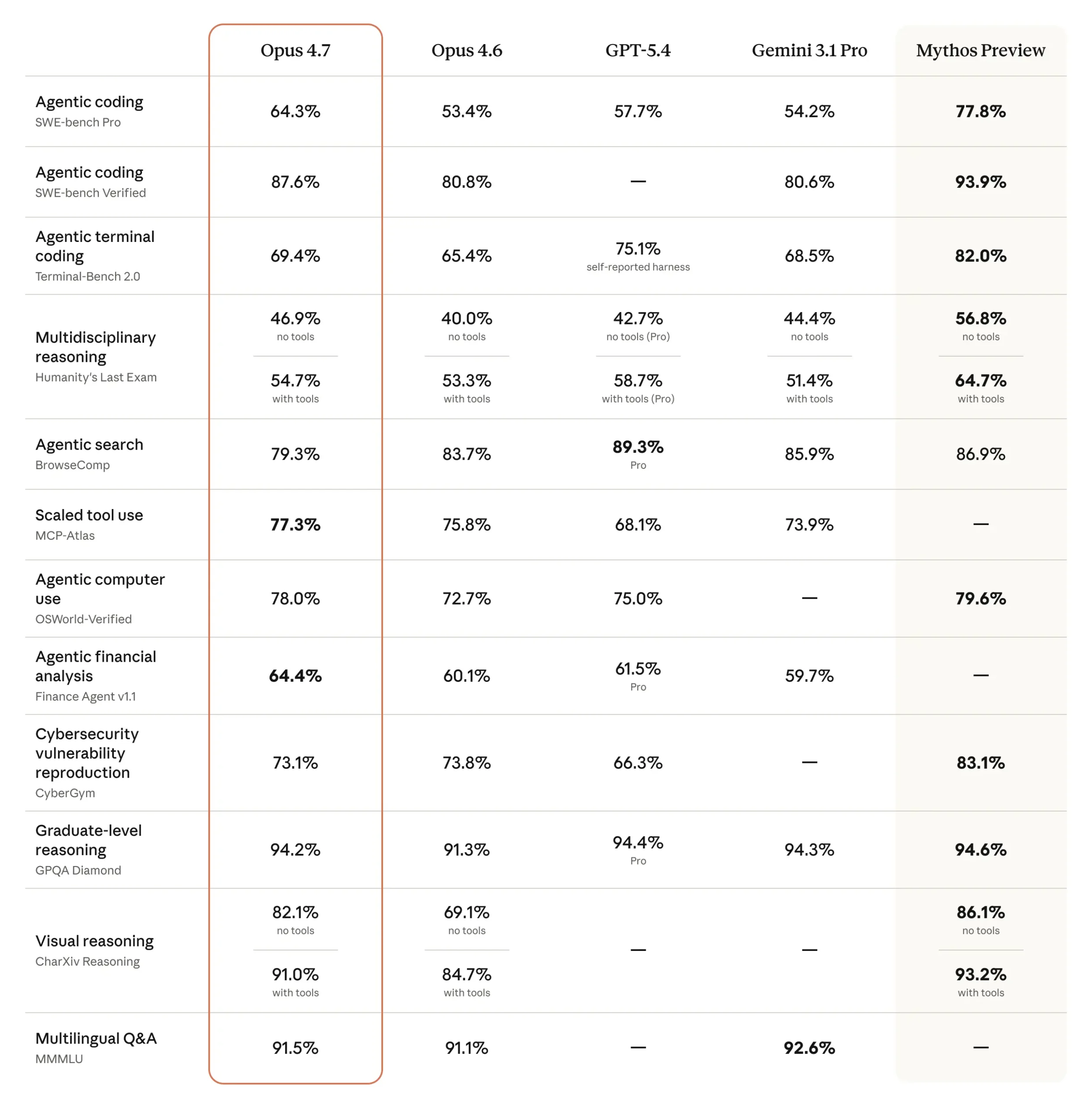

Claude Opus 4.7 is now Anthropic’s most capable publicly available model, delivering major improvements in coding, reasoning, and real-world task execution. Yet it deliberately stops short of the far more powerful technology Anthropic has already built behind the scenes.

That hidden system is Mythos, a model so advanced, particularly in cybersecurity, that it remains restricted to a small group of trusted partners, including major tech firms and financial institutions.

And that contrast is the real story.

Opus 4.7 is not just an upgrade. It’s a carefully controlled bridge between today’s usable AI and a future that even its creators are not yet ready to fully release.

On the surface, Opus 4.7 looks like what you’d expect from a next-generation model. It improves on its predecessor, Opus 4.6, across key areas like software engineering, instruction-following, document generation, and complex reasoning tasks, making it more useful for everyday enterprise and developer workflows.

It’s also widely accessible, available through Anthropic’s Claude platform, APIs, and major cloud providers, signalling that the company still intends to compete directly in the mainstream AI market.

But unlike many recent AI releases, Opus 4.7 has been designed as much around what it cannot do as what it can.

Anthropic has embedded safeguards that actively detect and block high-risk cybersecurity use cases, particularly anything that resembles vulnerability discovery or exploit development.

That’s not a coincidence. It’s a direct response to what the company has already seen in its more advanced systems.

Mythos, the model that sits beyond Opus 4.7, operates in a very different league.

In testing, Mythos has demonstrated the ability to identify software vulnerabilities at scale, analyse complex systems, and even simulate multi-step cyberattacks by chaining weaknesses together.

That level of capability is precisely why it hasn’t been released publicly.

Instead, Anthropic is running it through tightly controlled programs like Project Glasswing, where select organizations can use it to strengthen defenses while regulators and governments assess the broader risks.

The implication is clear: AI has crossed into territory where its capabilities are no longer just commercially valuable but strategically sensitive.

And Opus 4.7 is Anthropic’s attempt to navigate that reality.

Rather than holding everything back, the company is releasing a version of its technology that is powerful enough to be useful, but constrained enough to be considered safe, at least for now.

This reflects a broader shift happening across the AI industry.

For years, progress was measured almost entirely by capability: how much smarter, faster, or more accurate models could become. Now, companies are increasingly being forced to balance capability with containment, especially in domains like cybersecurity, where the same tools can be used for both defense and attack.

Anthropic has taken a particularly cautious stance.

Its internal frameworks, including its Responsible Scaling Policy, are designed to evaluate whether models approach thresholds of “catastrophic risk” before they are released, a signal that the company sees advanced AI less as software and more as a potentially sensitive technology requiring staged deployment.

Opus 4.7 fits neatly into that framework.

It allows Anthropic to gather real-world data, test its safety mechanisms, and refine how users interact with powerful systems, all while keeping its most advanced capabilities under tight control.

But this strategy also raises questions.

Some critics argue that holding back models like Mythos could be as much about competitive positioning as safety, especially in a market where perception matters as much as performance. Others point out that even limited releases can still shift the threat landscape, particularly if attackers begin to gain access to similar tools.

Either way, the direction is unmistakable.

AI is no longer just a race to build the most powerful model.

It’s a race to figure out how and whether those models should be released at all.

And with Opus 4.7, Anthropic is sending a clear signal: The future of AI won’t just be defined by what it can do.
