Meta’s revamped AI push is driving new interest in its standalone Meta AI app and dragging a quiet “growth hack” back into the spotlight: your Instagram friends can be told you’re using it, whether you like it or not.

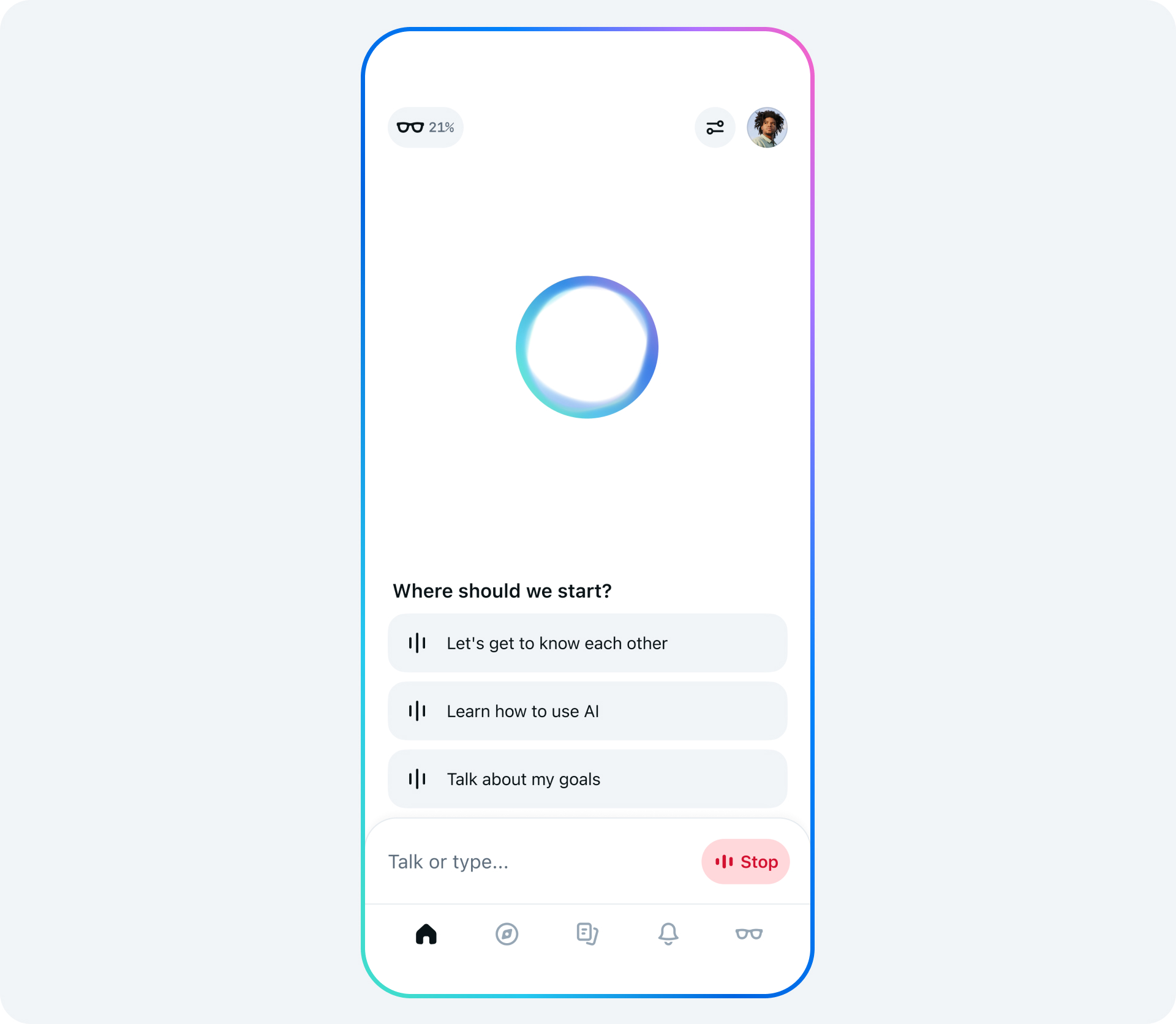

Following the release of Meta’s new Muse Spark AI model this week, downloads of the Meta AI app have spiked. Market intelligence firm Appfigures says the app has climbed from No. 57 to No. 5 on the U.S. App Store after the chatbot overhaul. That attention is also resurfacing a design choice that some users are finding uncomfortable: Instagram can send notifications to your contacts that you’ve joined the Meta AI app, without any explicit opt-in prompt.

The Meta AI app itself isn’t new. It launched in April last year, and early adoption was modest by Meta standards. In its first month and a half on the App Store, it saw 6.5 million downloads, according to Appfigures. That is a large number in general, but small for a company whose apps reach an estimated 42% of the world’s population daily.

To boost growth, Meta began using Instagram to promote the app. Users report that Instagram generates notifications highlighting which of their friends are on Meta AI, presented as prominently as alerts for new followers. Those alerts arrive without the Meta AI app asking for permission to broadcast that you’ve joined.

That means downloading the app can trigger a cascade of messages from friends and acquaintances who suddenly see your usage surface in their Instagram notifications. The experience is socially awkward for some, but it also points to how tightly Meta has linked its ecosystem together and how hard it is for users to track what information moves where.

Access to the Meta AI app requires a Meta account, and many people log in with the same account they’ve used since their Facebook sign-up days, already tied to Instagram and Facebook. Activity across these products feeds into Meta’s broader ad-targeting systems. That includes what you do in the Meta AI app itself. A user who discusses something like menstrual health with the chatbot could then see related ads, such as period products, on Instagram.

The app does not surface clear, in-context prompts asking whether your usage can be shared with friends or whether your chat content can be used as advertising data. Instead, those permissions are buried in the terms of service and privacy documentation that most users never read closely before tapping “agree.”

The notification behaviour isn’t the only Meta AI design decision to raise privacy and usability concerns. Last year, Meta briefly experimented with a Discover feed inside the app that surfaced public AI conversations. Users had to manually hit publish for a chat to appear there, but the interface proved confusing enough that people were inadvertently sharing sensitive information.

Observers, including venture capital partner Justine Moore, noted that the Discover feed was quickly filled with posts from older users who didn’t seem to realise their chats were being made public. Some of the shared conversations were trivial, like a user asking, “Why do some farts stink more than other farts?” Others contained highly personal details, including home addresses, medical information and intimate questions about relationships and marriage.

The combination of two dynamics made this particularly fraught. First, a significant portion of Meta’s user base is older and not always comfortable with newer interface patterns. Second, users often treat AI chatbots as private sounding boards for issues they consider too intimate or embarrassing to share with real people. When those assumptions collided with a public Discover feed, the result was a stream of unintentionally exposed personal data.

Meta has since removed the Discover feature from the Meta AI app, an implicit acknowledgement that the design was causing real-world privacy problems even if posting was technically “opt-in.” The episode underscores how easily design choices around sharing and defaults can lead to oversharing by less tech-savvy users.

The current app still has a “Vibes” feed, which surfaces AI-generated content, though it is not clear how it works or what signals it uses. What is clear is that Meta continues to experiment with social and discovery layers on top of its AI product, blurring the line between what users think of as private chatbot interactions and what can become visible or leveraged elsewhere in Meta’s network.

All of this is happening as Meta is under pressure to prove its massive AI investments can succeed where its metaverse spending largely stalled. The launch of Muse Spark is part of a broader reset of the company’s AI efforts. The jump in Meta AI app rankings shows that strategy is at least generating short-term interest, but it is also pulling more people into an ecosystem where their behaviour may be surfaced and monetised in ways they don’t fully anticipate.

For now, the practical takeaway is simple: installing the Meta AI app isn’t just a private experiment with a chatbot. It can show up in friends’ Instagram notifications, and your chats may inform the ads you see across Meta platforms. The company technically has consent through sprawling terms of service, but users who assume their AI conversations are invisible to their social graph or firewalled from ad systems are likely overestimating how much separation there really is.

Discover more from TechBooky
