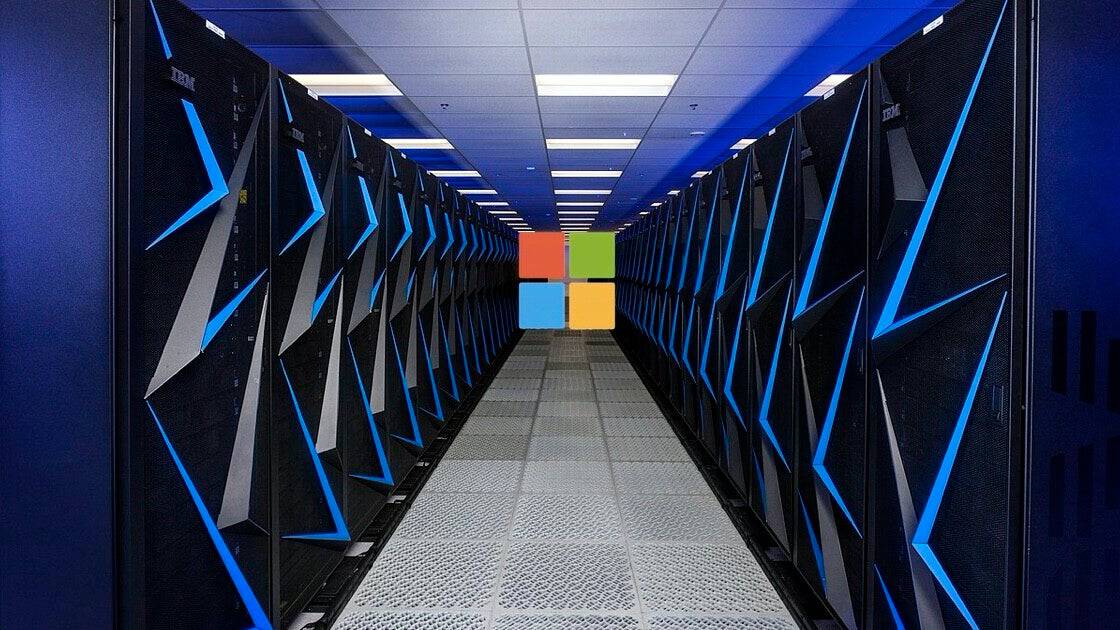

In a bid to power OpenAI’s ChatGPT chatbot, software giant Microsoft has invested hundreds of millions of dollars in building a massive supercomputer, according to a Bloomberg report. On Monday, Microsoft published a blog post describing how it developed the advanced artificial intelligence infrastructure in Azure that OpenAI uses, and how those systems are becoming even more efficient.

Microsoft says it connected thousands of Nvidia graphics processing units (GPUs) on its Azure cloud computing platform to build the supercomputer that supports OpenAI’s work. That infrastructure enabled OpenAI to train ever more powerful models, which “unlocked” the AI capabilities of tools such as ChatGPT and Bing.

According to Bloomberg, Scott Guthrie, Microsoft’s executive vice president of cloud and AI, said the company spent several hundred million dollars on the project. While that may seem small next to Microsoft’s recent extension of its multiyear, multibillion-dollar investment in OpenAI, it shows the company is prepared to put even more money into AI.

Microsoft is already working to expand Azure’s AI capabilities with the launch of new virtual machines that use Nvidia’s H100 and A100 Tensor Core GPUs along with Quantum-2 InfiniBand networking, a project Microsoft and Nvidia teased last year. Microsoft says this should allow OpenAI and other companies that rely on Azure to build more complex and more capable AI models.

Eric Boyd, Microsoft’s corporate vice president of Azure AI, said in a statement: “We saw that we would need to build special-purpose clusters focusing on enabling large training workloads and OpenAI was one of the early proof points for that.” Boyd added, “We worked closely with them to learn what are the key things they were looking for as they built out their training environments and what were the key things they need.”
